#73 - Your fraud system is dying

Most teams perceive fraud prevention as a 2D problem.

Why 2D? To represent the tension between two facets.

No, not how good it is at detecting fraud versus how many false positives it creates. These are two sides of the same coin - accuracy.

It’s the tension between accuracy itself and coverage - how much of the fraud you actually catch.

But there’s another, hidden dimension that operates in tension with these two, making fraud a 3D problem.

Time.

How so? Accuracy and coverage aren’t static metrics. They change over time, both for the system as a whole and for each of its components.

For some components, the degradation clock ticks faster, and for some slower. But it ticks for them all.

Today I’d like to talk about how to manage that 3rd dimension.

Why does every fraud system rot?

The principle behind answering this question is simple: fraud prevention is an adversarial discipline, where our opponent forever tries to outsmart our defenses.

Shocking, I know.

When we ship a fraud solution, we attribute some value to it based on its measured performance - how much fraud it blocked versus how many new false positives (FPs) it created.

But these numbers start changing immediately upon go-live as fraudsters encounter the solution and start slowly eroding its effectiveness.

We already discussed how that might look when you consider your different rule categories.

This principle isn’t different for most other solutions you might employ:

ML fraud detection models degrade with time as mentioned in the article linked above.

Block lists are as static as they come and degrade quickly without constant updates.

Behavioral biometrics also degrade as new tools become available to fraudsters, and need constant updates to their feature layer.

DocV/Liveness checks are only as good as the models that power them - and these are constantly under attack.

And I’m sure there are other solutions that escape my mind as I write this.

In fact, I can only think of two kinds of solutions that are somewhat immune to degradation.

The first one is Agentic AI, especially if the agent is running on “fresh” datasets, which is usually the case.

The second “solution” is, ironically, us humans.

Especially if we run on “fresh” datasets (our brains), which is usually the case.

Notice the distinction between the two solution groups? The first group, the solutions that degrade, runs automatically and mostly in real time, while the second group, the ones that resist degradation, runs mostly (semi-)manually and as part of a back-office process.

The more automated a solution is, the more its feedback loop is at risk.

And the more at risk the feedback loop is - the faster it rots.

Side note: We already discussed the concept of learning cycles in fraud prevention. If you read just one issue of TSFS in your life, read the one where we broke it down.

How to measure performance degradation

Simple and straightforward:

You measure degradation as you measure performance. You don’t have to reinvent the wheel.

Side note: Measuring accuracy of live solutions can be tricky. Here’s some reading on how to detect false positives. Similarly, here’s some reading on how to measure how much fraud you actually blocked.

The critical thing is to make sure you don’t only measure solutions when they go live, but keep an eye on them throughout their lifecycle.

In that sense, the only question you need to ask yourself is how you are going to monitor each solution category (rules, models, etc.) - manually or automatically?

If possible, automated monitoring is always the preferred way to go, especially for complex fraud systems. If you can spin up a couple of dashboards and set alerts to fire when metrics cross a threshold, it’ll make your life much easier.

Alternatively, you can always resort to a manual review that occurs periodically. How periodically? That really has to do with the solution type and use case.
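Whichever route you take, the underlying measurement is the same. Here is a minimal sketch of what ongoing measurement could look like for a single solution, assuming you keep a log of what it blocked or flagged and can later label each event as confirmed fraud or not. The `Decision` record, its field names, and the weekly bucketing are illustrative assumptions, not a prescribed schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class Decision:
    """One blocked or flagged event from a single solution (hypothetical schema)."""
    day: date
    confirmed_fraud: bool  # label from chargebacks, manual review, etc.


def weekly_metrics(decisions):
    """Group decisions by ISO week; return {(year, week): (volume, precision)}."""
    buckets = defaultdict(list)
    for d in decisions:
        iso = d.day.isocalendar()
        buckets[(iso[0], iso[1])].append(d.confirmed_fraud)
    return {
        week: (len(labels), sum(labels) / len(labels))
        for week, labels in sorted(buckets.items())
    }


# Made-up sample data: four events in one week, two in the next.
decisions = [
    Decision(date(2024, 1, 1), True),
    Decision(date(2024, 1, 2), True),
    Decision(date(2024, 1, 3), False),
    Decision(date(2024, 1, 4), True),
    Decision(date(2024, 1, 8), True),
    Decision(date(2024, 1, 9), False),
]
for week, (volume, precision) in weekly_metrics(decisions).items():
    print(week, volume, round(precision, 2))
```

The same per-week numbers can feed a dashboard or a periodic manual review. Only the cadence and who looks at them changes.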

How to detect performance degradation

The trickier question is how to detect unacceptable degradation.

As noted earlier, we do expect solutions to start degrading almost instantly.

But in order to be practical, we need to establish what threshold must be crossed and for how long before a solution is considered degraded.
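To make "what threshold, and for how long" concrete, here is a rough sketch of one possible check. It flags a solution only when a metric stays below a floor, defined relative to the go-live baseline, for several consecutive weeks. The function name, the 30% drop, and the three-week window are placeholder assumptions for illustration. Pick values that fit your solution type.

```python
def is_degraded(baseline, weekly_values, max_drop, min_weeks):
    """True if the latest `min_weeks` values are all more than `max_drop`
    (a fraction, e.g. 0.30) below the go-live baseline."""
    if min_weeks < 1 or len(weekly_values) < min_weeks:
        return False
    floor = baseline * (1 - max_drop)
    return all(v < floor for v in weekly_values[-min_weeks:])


# Made-up weekly precision for a rule that went live at 0.80.
precision_by_week = [0.80, 0.78, 0.52, 0.79, 0.55, 0.54, 0.50]

# A single bad week (0.52) followed by a recovery does not trigger...
print(is_degraded(0.80, precision_by_week[:4], max_drop=0.30, min_weeks=3))  # False
# ...but three consecutive weeks below the floor do.
print(is_degraded(0.80, precision_by_week, max_drop=0.30, min_weeks=3))  # True
```

Requiring a sustained breach trades a slower alert for fewer false alarms from one noisy week.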

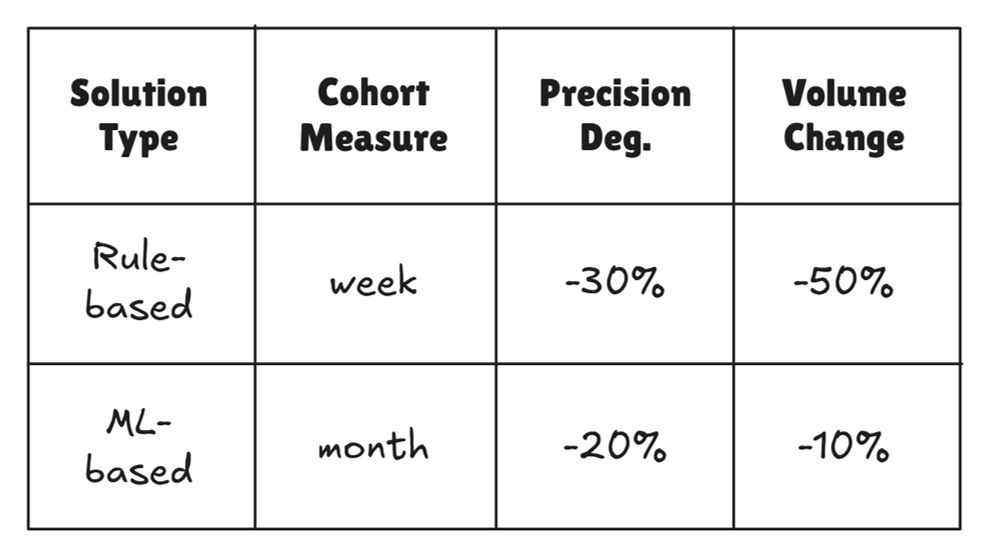

In the table below you can find some rules of thumb for how to approach it:

For example, rule-based solutions should be measured in weekly cohorts.

Don’t measure them week-over-week (WoW), as degradation often creeps in. Instead, always compare last week’s performance to the original performance at go-live.

The reason is simple: fraudsters don't bypass your defenses all at once. They adapt gradually, which means week-over-week comparisons can often look acceptable. Right up until they don't.

Anchoring to go-live is what makes slow erosion visible. If precision is down 30% or volumes are down by 50%, you may want to take a closer look.
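A quick worked example of why the anchor matters, using made-up numbers: a rule goes live at 80% precision and loses four points every week. The helper name and the series are assumptions for illustration only.

```python
def relative_drop(reference, current):
    """Fractional drop of `current` relative to `reference` (0.25 means 25% lower)."""
    return (reference - current) / reference


go_live_precision = 0.80
weekly_precision = [0.76, 0.72, 0.68, 0.64, 0.60, 0.56, 0.52]  # made-up numbers

previous = go_live_precision
for week, current in enumerate(weekly_precision, start=1):
    wow = relative_drop(previous, current)
    vs_go_live = relative_drop(go_live_precision, current)
    print(f"week {week}: WoW drop {wow:.0%}, drop vs go-live {vs_go_live:.0%}")
    previous = current
```

In this series no single week looks alarming, since the week-over-week drop stays under 8% throughout. Measured against go-live, though, precision is 35% lower by week 7.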

Side note: How come ML-based solutions have lower thresholds? It’s mainly because they degrade more slowly, but also because they take more time to be assessed and refreshed. You want to catch meaningful changes early enough.

The bottom line

Many teams treat fraud detection as a 2D problem.

They measure performance at go-live, move on, and come back when something breaks. By then, the degradation has already cost them.

Adding time as a third dimension changes the game. It means treating every solution you deploy as a living system with a decay rate, and building a monitoring system that tracks it.

The good news? It doesn't require fancy tooling.

It requires discipline: knowing which solutions you own, what their baseline performance was at go-live, and whether they're still performing within acceptable bounds today.

Does your team review solution performance based on go-live measures? Would love to hear which metrics you’re looking at to know something’s up - hit reply and let me know!

In the meantime, that’s all for this week.

See you next Saturday.

